09 Mar 2023

Hello, fictive reader. Blog’s been quiet for years.

Working in government has significant chilling effects on public speech, but suffice to say Canberra has been an (unexpected) blessing in terms of work. We’ve been here about seven years and it’s been a great journey in design, tech, climate – and consultancy scale-ups.

This year looks a bit different.

I finished up at Pragma and am taking the year to study ✨theology✨ (no, not astrology!). The Jesus kind.

Getting to the point of ‘quit your job and study the bible’ is a bit of a journey. More to be said than is said here, so do reach out if you would like to chat!

You’re studying what now?

I’ve enrolled full time at Ridley College, based in Melbourne, but studying online.

Theology is studying God and religious belief - in this case, consistent with Christian faith. Ridley are ‘reformed evangelical’ and (self-describedly) offer world class theological education. The academic rigour is real (more below). I’m just a few weeks in now and it has been wonderful learning from their staff. I know only a few people who have studied there, but it’s been widely recommended as the best distance option. (There are, of course, caveats on distance that are in some measure predictable in our COVID-weary world - ask and I’ll tell you!)

Too jargony? Parse ‘reformed/evangelical’ as ‘a focus on how God has spoken to us through the bible, and the historical Jesus’ life, death and resurrection’.

Can’t you just read the bible?

(Or, my fav variant on this question: don’t you already know what is in the bible?)

One of the New Testament letter writers sets the frame for this: “the word of God is living and active, sharper than any two-edged sword, piercing to the division of soul and of spirit, of joints and of marrow, and discerning the thoughts and intentions of the heart.” (Heb 4:12)

There’s an imperative to read again, and again. But it’s a fair question. Full-time study isn’t the ‘real world’, and isn’t forever. In day-to-day Christian life, we read the words of God, and probably use a bit of what we’ve heard in faith settings as a lens to understand how they fit together.

This sentiment is pretty familiar: “…some people today believe the [writers of gospels] took divine dictation: they were merely stenographers, the secretaries of the Holy Spirit.”

Part of the humanities bag-of-tricks is considering context and form. Part of the rigour is piecing together what smart people, who wrestle with the mess/age of history, know about the academic reality of how we get these texts, and beliefs.

The reality is real people wrote the words of life, to particular people at a particular time.

What’s the driver?

A number of things, best told in person, got us to this decision point in late January.

This is a strange choice. But the bible describes a strange people. The main game is to understand God’s salvation without dull eyes, ears or heart, to persuade about Jesus in the Spirit’s power.

I am trusting God will graciously use this year for greater understanding and transformation. Now, ‘knowledge puffs up’. If you talk to God, please ask it doesn’t give selfish pride but the fruit of the Spirit - love, joy, peace, patience, kindness, goodness, faithfulness, gentleness and self-control.

People I’ve told this have, broadly, responded with “you do you, you’ve got to look after yourself” (self-actualisation, I suppose?) or perhaps presumed it is a bit of a nerding-out exercise, maybe lacking in rigour. Others still wonder if it is credentialling - accordingly, I will be bored.

To be honest, I am grappling with the firehose of content right now. Perhaps common to new endeavours, perhaps God already answering that prayer about pride.

While humanities study isn’t new, theological education, and full-time study, is. Transitioning from 4-day-a-week work to a full-time course load has also been genuinely difficult to keep up with. The kids of course don’t pause: they started a new school this year and have their own full little lives, and the rest of life remains busy and (a little unpredictably) full.

But God is not far off, and so I pray in the Spirit for perseverance in studies this year!

–

12 Jul 2018

A while back I stumbled across a FootprintDNS host on a few Office 365 services, and wondered what it was up to. A few others were wondering the same. There didn’t seem to be an answer out there.

We’ve been looking into using Azure Traffic Manager for load balancing and in particular their Real User Monitoring feature. In the process of auditing how this works (noting it uses client-side JS), it became clear that it’s using footprintdns.com for tracking.

https://www.atmrum.net/client/v1/atm/fpv2.min.js is worth a read. It uses .clo.footprintdns.com subdomains for tracking.

Ostensibly the purpose is to learn about real user latency - which means that anecdotal reports of this service taking a while to load are sensible, and in line with its purpose!

For those wondering if this is blockable: blocking it would seemingly have no impact on end-user experience, but of course in aggregate it means Traffic Manager won’t be able to balance your users’ requests toward lower latency endpoints. Probably not a big issue if you are security conscious.

If you would like to try something more targeted – on the off chance there are other, undocumented uses of this domain – you could instead block atmrum.net (and subdomains) which hosts the loader script for Azure Traffic Manager’s Real User Monitoring.
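If you are scripting the block yourself (say, in a proxy rule or a browser extension), the "domain and subdomains" matching can be sketched roughly as below. This is a minimal sketch, not how any particular firewall does it: `BLOCKED_DOMAINS` and `isBlockedHost` are names made up for illustration, and `abc.clo.footprintdns.com` is an invented hostname standing in for whatever subdomain you actually observe.

```javascript
// Hypothetical blocklist: the two domains named in this post.
const BLOCKED_DOMAINS = ["atmrum.net", "footprintdns.com"];

// Returns true when `hostname` is a blocked domain or any subdomain of one.
function isBlockedHost(hostname, blockedDomains = BLOCKED_DOMAINS) {
  // Normalise case and drop a trailing dot (fully-qualified form).
  const host = hostname.toLowerCase().replace(/\.$/, "");
  return blockedDomains.some(function (domain) {
    // Require a dot boundary so "notatmrum.net" does not match "atmrum.net".
    return host === domain || host.endsWith("." + domain);
  });
}

console.log(isBlockedHost("www.atmrum.net"));           // true
console.log(isBlockedHost("abc.clo.footprintdns.com")); // true (invented hostname)
console.log(isBlockedHost("notatmrum.net"));            // false
```

The dot-boundary check is the only subtle part: a plain suffix match would also catch unrelated domains that happen to end in the same letters.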

27 Jan 2017

Finally got around to playing with LetsEncrypt this evening. I was naively copy-pastaing my way through a tutorial when I ran up against this error.

Failed authorization procedure. www.example.com (tls-sni-01): urn:acme:error:tls :: The server experienced a TLS error during domain verification :: Failed to connect to 104.27.178.157:443 for TLS-SNI-01 challenge, example.com (tls-sni-01): urn:acme:error:tls :: The server experienced a TLS error during domain verification :: Failed to connect to 104.27.178.157:443 for TLS-SNI-01 challenge

The IP in the error belongs to Cloudflare, which was already active and providing ‘flexible’ (i.e. illusory) SSL. [1]

One way to fix this is to make a DNS change to point directly at your origin server (your own IP), instead of the CDN, while you provision the cert. But half the point of LetsEncrypt is that you get readily automated and cycleable certificates, which big scary DNS changes would get in the way of. Not to mention that DNS changes are typically slow/multistage/relatively hard to script.

Thankfully, LetsEncrypt are smart folks and have another option.

Enter --webroot.

sudo letsencrypt --apache -d example.com -d www.example.com --webroot --webroot-path=/var/www/html/ --renew-by-default --agree-tos

> Too many flags setting configurators/installers/authenticators 'apache' -> 'webroot'

Did I mention I was using Apache?

The copy-paste tutorial I was following used the --apache switch as a way of, I guess, automagically configuring a virtualhost. I broadly trust that LetsEncrypt will have saner defaults than whatever other DigitalOcean tutorial I used to provision the box in the first place, so that seemed like a good idea. Only, of course, it wasn’t compatible with the --webroot switch.

But, eventually, this did:

sudo letsencrypt --installer apache -d example.com -d www.example.com --webroot --webroot-path=/var/www/html/ --renew-by-default --agree-tos

Seems like --apache is shorthand for --installer apache, which is somehow sufficiently differentiated from --webroot to not throw errors. Don’t know what I’m doing deeply enough to file a bug yet so throwing this blog up for a hopefully searchable solution in the meantime.

–

[1] This has been written up dozens of times, but to summarise the issue:

Browser talks to CDN talks to Server.

Browser to CDN is secure. CDN to Server is insecure.

So we’re trying to fix that last mile (which, of course, still leaves the “CloudFlare can MITM half the Internet” issue… but that’s a bit beyond my immediate threat model).

11 Jul 2016

Need to see what’s running?

I think Optimizely might’ve done something to make this trickier as an optional project setting, but it’s handy to be able to debug what’s running and possible splits.

Object.keys(optimizely.allExperiments).map(function(item){

item = optimizely.allExperiments[item];

if (!item.enabled) return;

console.log([item.name,'\n',item.variation_ids.map(function(v){

return "\t" + item.variation_weights[v]/1000 + "%: " + optimizely.allVariations[v].name;

})].join(''))

})

Paste in console.
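If you want to poke at the logic without a live page, here is a rough standalone variant that runs against a stand-in object. Everything in `sampleOptimizely` (IDs, names, weights) is invented, and `describeExperiments` is just a name I made up. The weight scale is an assumption too: the sample uses a 0 to 10000 scale and so divides by 100, whereas the snippet above divides by 1000, so check against your own data if the percentages look off.

```javascript
// Stand-in for the page's `optimizely` global. All values here are made up.
const sampleOptimizely = {
  allExperiments: {
    "101": {
      name: "Homepage hero",
      enabled: true,
      variation_ids: ["1", "2"],
      variation_weights: { "1": 5000, "2": 5000 }
    },
    "102": {
      name: "Old test",
      enabled: false,
      variation_ids: ["3"],
      variation_weights: { "3": 10000 }
    }
  },
  allVariations: {
    "1": { name: "Original" },
    "2": { name: "Big button" },
    "3": { name: "Original" }
  }
};

// Returns one string per enabled experiment: its name, then one
// tab-indented line per variation with its traffic share.
// `weightDivisor` defaults to 1000 to match the console snippet above.
function describeExperiments(o, weightDivisor = 1000) {
  return Object.keys(o.allExperiments)
    .map(function (id) { return o.allExperiments[id]; })
    .filter(function (experiment) { return experiment.enabled; })
    .map(function (experiment) {
      const lines = experiment.variation_ids.map(function (v) {
        const share = experiment.variation_weights[v] / weightDivisor;
        return "\t" + share + "%: " + o.allVariations[v].name;
      });
      return [experiment.name].concat(lines).join("\n");
    });
}

// Sample weights are on an assumed 0-10000 scale, hence the divisor of 100.
describeExperiments(sampleOptimizely, 100).forEach(function (summary) {
  console.log(summary);
});
```

Returning strings rather than logging inside the loop keeps it easy to test, and joining the variation lines explicitly avoids the stray commas you get from stringifying a nested array.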

13 Dec 2015

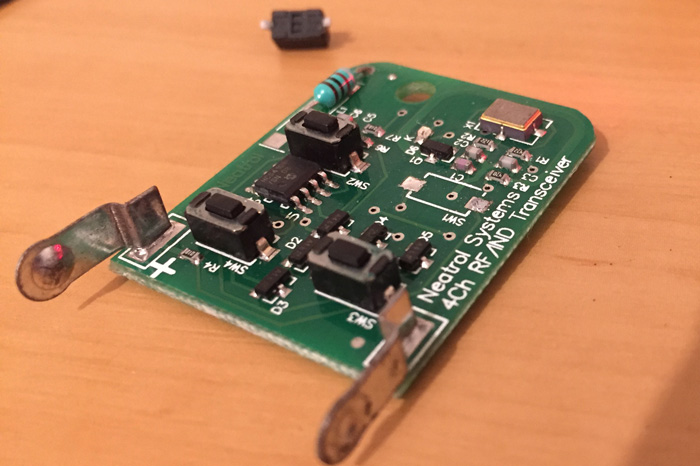

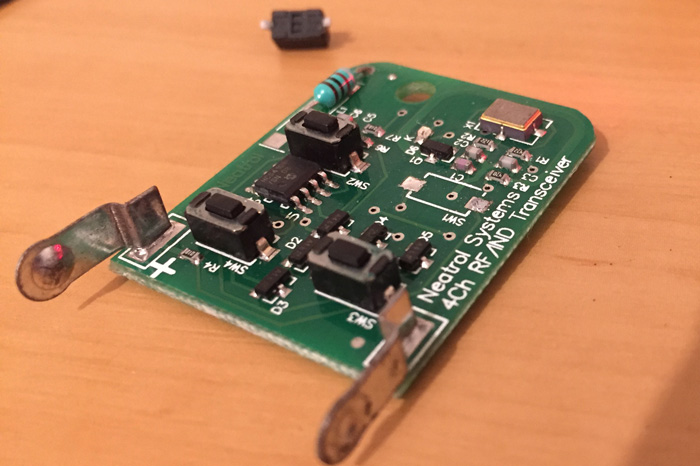

Our garage remote died recently. We subbed the battery, and nothing improved. Stood closer, nothing improved. Sporadic successes were very much outweighed by failures.

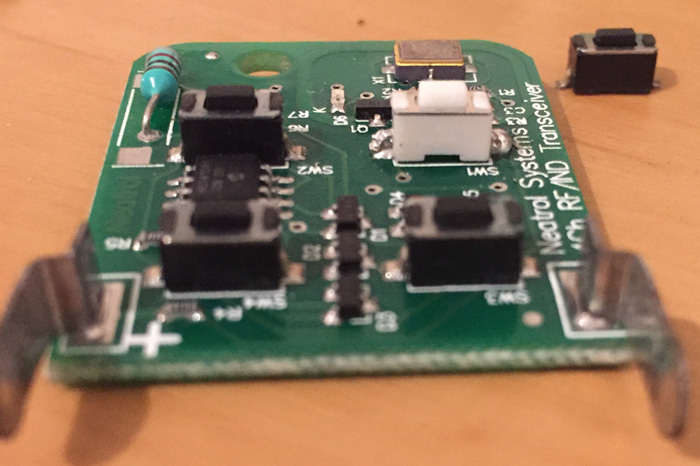

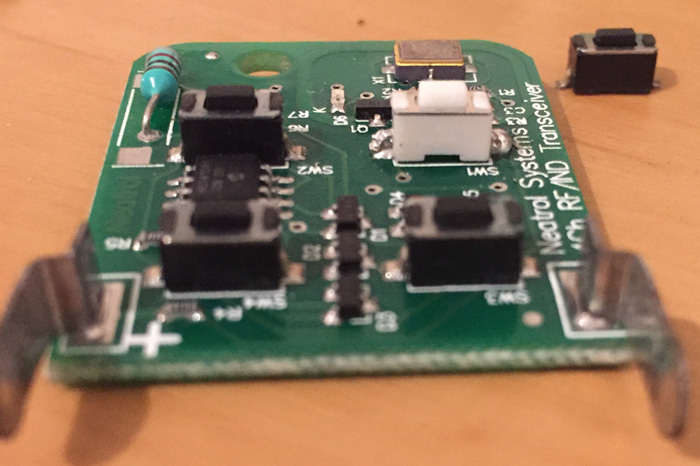

The base station/“receiver” (it’s actually a transceiver, I think? If you want to learn more about this than I did, KeeLoq would be a good starting point) in our little block of four has been known to have some problems, but the sporadic failures and our pretty comical attempts to hold the remote at different angles, out windows, bashed-sideways, bashed-frontways, etc., were yielding poorer and poorer results.

Anyway, an afternoon prodding the thing got us to “oh the button’s probably dead”. So much for those new batteries.

At least in our situation, a four-button remote is a pretty useless thing - there’s only one gate to control. Turns out the numbering on the front of these things doesn’t even line up with the PCB - we hit “2”, which always confused me no end, but this is really pressing button 1 on the circuit.

Button 1, in this case, was doing nothing at all.

Replacement time!

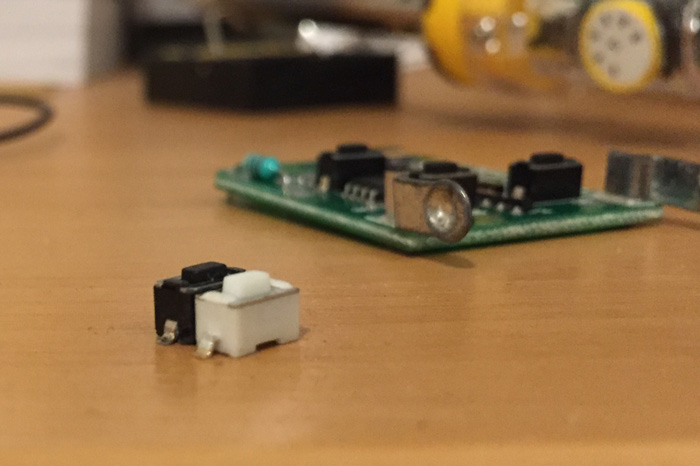

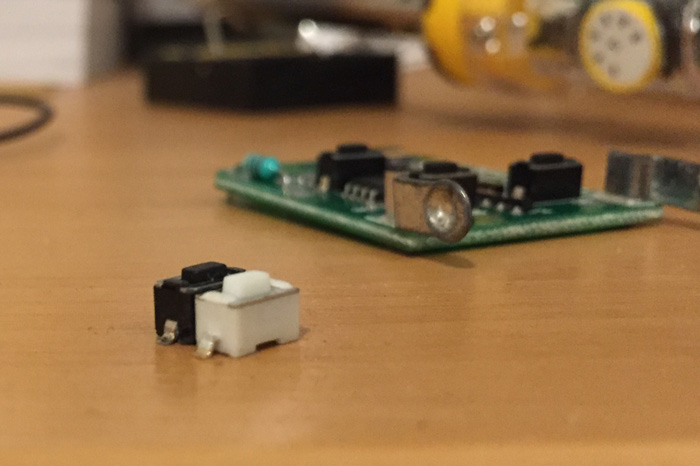

The PCB’s pretty generously spaced, probably because no-one wants a remote so tiny it gets lost. The surface mount buttons are pretty easy to work with. I ordered this pack of 20 buttons from an eBay seller (described as a “20pcs 6x3x4.3mm SMT SMD Momentary Micro Tact Tactile Push Button Switch” in the very likely event the listing has vanished by the time you’re reading this… eBay, stop the bitrot!) for about $5 having measured up the button as being, well, vaguely that size. The buttons on my remote were black and these were white, but hey, it’s behind a piece of rubber anyway. If anyone needs 19 SMD buttons and is in Sydney, holler at me - they’re probably in a drawer somewhere.

I realised while I was popping off the old button and soldering on the new that I actually didn’t need to order any buttons at all, of course.

If you, like me, only use one of your four remote buttons… just swap the bad one for a good one! My soldering is shocking but the original SMD work was pretty tidy, so it was easy to get things on and off. The good news is it’s a spacious enough board you probably don’t need to worry too much about melting the wrong thing.

Anyway, yay, working remotes again!